Outsourced Understanding

When everyone can produce the output, what happens to the people who never learned how?

Jerry Seinfeld sat across from Jimmy Fallon and delivered the kind of line that only works because it’s true. Talking about the rise of AI, he said:

“Let’s be honest. Most of the intelligence we’ve encountered in our life: pretty artificial, right?”

The audience laughed. They were supposed to. But the joke does something that most AI commentary doesn’t. It skips past the technology entirely and points the camera back at us. We’ve been performing intelligence for decades. Memorizing facts we don’t understand. Citing frameworks we’ve never applied. Quoting research we’ve never read past the abstract. AI didn’t introduce artificial intelligence into the world. It just automated the version we were already producing.

Which raises a question: if most of what we called understanding was already outsourced, what exactly are we afraid of losing?

Let me park that thought for a moment. Off the coast of Norway, there’s a story about old fish and young fish that might tell us more about the future of human expertise than anything Silicon Valley has published. Stay with me on this one.

What Norwegian Fish Can Teach Us About Forgetting

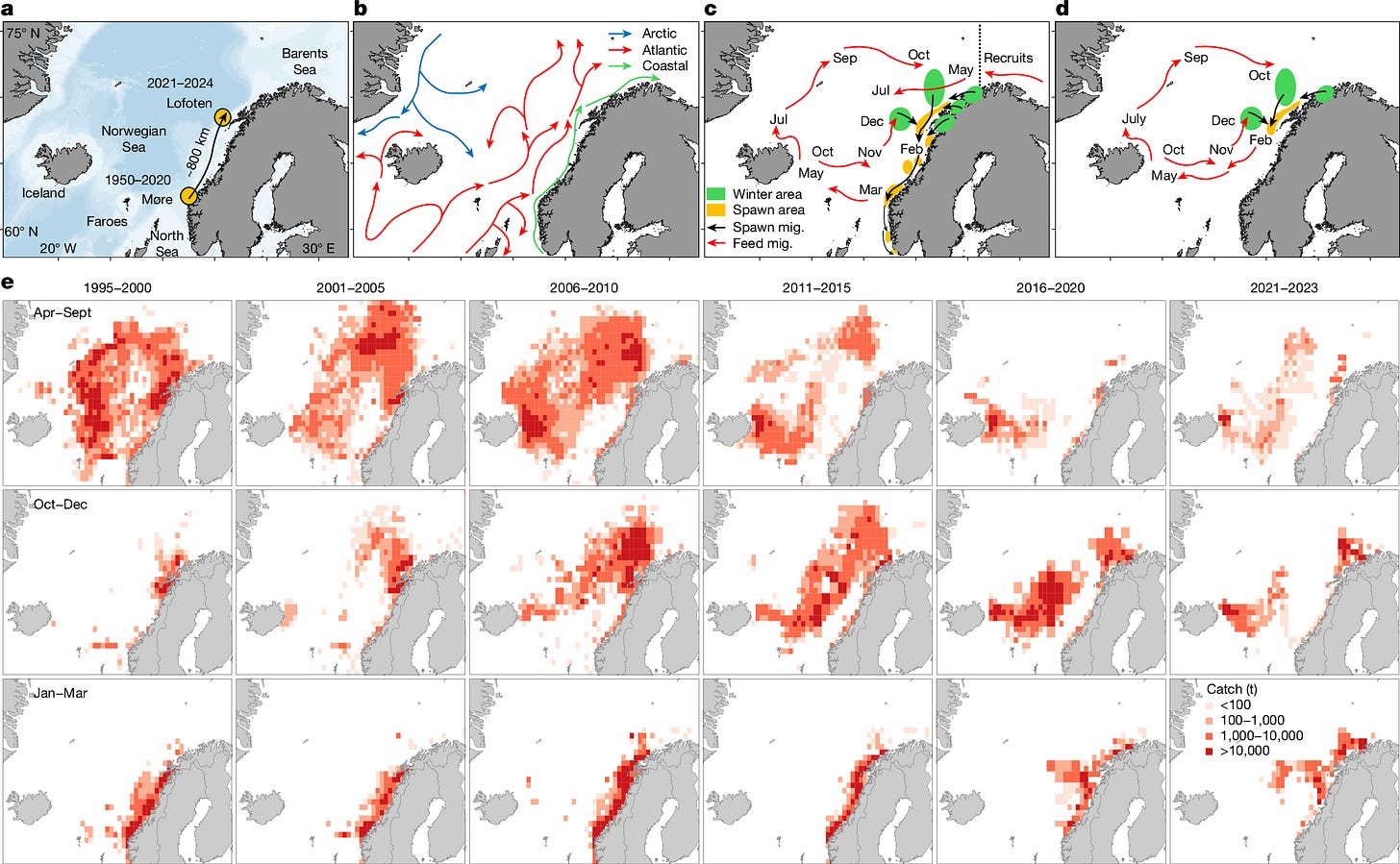

Herring are small, silvery schooling fish. Billions of them move through the North Atlantic in enormous coordinated migrations, following routes that take them from deep winter waters to coastal breeding grounds and back again. They’ve been doing this for centuries. The routes aren’t random. They follow specific currents, temperature gradients, and food sources that the fish have learned to navigate across generations.

Norwegian marine biologists found that after overfishing wiped out the older, experienced herring, the younger fish had to invent their own routes. They chose poorly. Their new paths led them to colder, less hospitable waters where survival rates dropped. In a single generation, centuries of accumulated navigational knowledge vanished.

The older herring didn’t just know where to swim. They understood why those routes worked. Which currents carried food. Which temperatures meant safety. Which timing aligned with breeding conditions. None of this was written anywhere. It lived in the bodies and behaviors of fish who had made the journey, survived it, and come back to lead the next group.

Remove the experienced fish and the knowledge doesn’t transfer. The young fish swim with the same confidence as their predecessors. They just swim in the wrong direction.

Keep this in mind. It will make sense as you go through the rest of the article.

The Two Camps

Back to Seinfeld’s question. There are two camps in this conversation, and they rarely talk to each other. Picture them in separate rooms.

In the first room, the argument goes like this: if you don’t understand the thing, you can’t get real results. You can produce output, sure. You can generate a passable structural analysis or a coherent legal brief or a marketing strategy that hits the right buzzwords. But you’ll have no way to evaluate whether the output is good, no capacity to catch errors that look correct on the surface, and no ability to adapt when the situation deviates from the template. Understanding isn’t a luxury. It’s the operating system. Without it, you’re assembling furniture without knowing what a load-bearing joint looks like. It holds until it doesn’t. And when it fails, you won’t know why.

In the second room, the argument is simpler and harder to argue with: I don’t care how the stove works. I care that my eggs are scrambled. The person who built the stove understood combustion. I understand breakfast. These are different competencies and always have been. The mechanic understands engines. The commuter understands routes. The electrician understands wiring. The homeowner understands which switch turns on which light. Specialization has always meant that most people use things they don’t understand, and the world works fine. AI just extends this logic. It doesn’t break it.

Both rooms are correct. And neither room is having the conversation that actually matters.

The Thing Hard Things Actually Build

The first room overestimates how many people need to understand physics. The combustion engineers, the thermodynamicists, the people who grasp the mechanics behind everyday systems: they were always a sliver of the population. A small, particular group who found the machinery more interesting than the output. AI doesn’t shrink that sliver. It just makes the sliver more visible by contrast, because now everyone else can produce outputs that used to require years of training.

The second room underestimates what the process of learning hard things actually produces.

A person who ground through four years of physics didn’t just learn Maxwell’s equations. They learned what it feels like to sit with confusion for weeks before clarity arrives. They learned the difference between “I don’t understand this yet” and “I can’t understand this.” They built, through repetition and failure and small breakthroughs that came at 2 AM on a Tuesday, an internal catalog of reference experiences for navigating the unknown.

The same is true across every domain where mastery requires prolonged struggle.

The musician who spent years learning jazz improvisation didn’t just acquire chord voicings. They learned to make decisions in real time under uncertainty, to listen and respond simultaneously, to tolerate being terrible at something for months before the neural pathways caught up with the ambition. The chef who trained in classical technique didn’t just memorize sauces. They learned to sense when something was about to go wrong by smell, by color, by the way a reduction sounded in the pan, and they learned to trust that sensory judgment over the recipe. The lawyer who spent three years buried in case law didn’t just memorize precedent. They learned to hold multiple contradictory interpretations of the same facts in their head at once and assess which one would land with a specific judge in a specific jurisdiction.

None of this is about the content. All of it is about the process. The content was the vehicle. The destination was the internal library that the struggle built.

We can express it this way:

Internal Library = Σ (Difficulty of Challenge × Duration of Struggle × Depth of Resolution)

You read this as: your internal library is the sum of every difficult thing you’ve worked through, weighted by how hard it was, how long you sat with it, and how deeply you resolved it. A shallow resolution after a brief struggle adds almost nothing. A deep resolution after a sustained struggle adds a reference experience you’ll draw on for the rest of your life.
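To make the arithmetic concrete, here is a rough sketch of that sum in Python. The formula gives no units or scales, so everything below is an assumption made for illustration: the 0-to-1 scales, measuring duration in months, the `Struggle` and `internal_library` names, and the example numbers themselves.

```python
from dataclasses import dataclass


@dataclass
class Struggle:
    """One difficult thing you worked through (hypothetical structure)."""
    name: str
    difficulty: float  # assumed scale: 0.0 (trivial) to 1.0 (brutal)
    duration: float    # assumed unit: months spent on it
    depth: float       # assumed scale: 0.0 (abandoned) to 1.0 (fully resolved)


def internal_library(struggles):
    """Sum of difficulty x duration x depth over every struggle."""
    return sum(s.difficulty * s.duration * s.depth for s in struggles)


# Made-up examples: a brief, shallow effort versus a long, deep one.
shallow = Struggle("skimmed a tutorial", difficulty=0.2, duration=0.1, depth=0.1)
deep = Struggle("four years of physics", difficulty=0.9, duration=48, depth=0.8)

shallow_score = internal_library([shallow])
deep_score = internal_library([deep])
print(f"shallow: {shallow_score:.3f}, deep: {deep_score:.2f}")
```

Because the three factors multiply, the shallow effort barely registers while the sustained one dominates the total. The exact numbers mean nothing. The gap between them is the point.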

This library has nothing to do with physics or law or cooking. It has everything to do with the capacity to navigate situations that don’t come with instructions.

Why Nobody Wants to Climb Anymore

This decline in appetite for hard learning isn’t theoretical, and it isn’t limited to one country.

In Australia, researchers have tracked declining enrollment in what they call the “triple sciences” (physics, chemistry, and biology) for over a decade. In Nigeria, studies tie the low popularity of physics at the secondary level directly to students’ perception that the subject is too difficult relative to its career payoff. Across the Gulf states, national assessments consistently show low student interest in STEM careers despite massive government investment in educational reform. In the UK, physics A-level uptake has been stagnant for years. The OECD’s Programme for International Student Assessment, which tests 15-year-olds across roughly 80 countries and economies, shows that math and science performance has been declining globally, not just in the West. And Africa, despite holding roughly 19 percent of the world’s population, produces less than 1 percent of global research output. Part of the reason is that physics and hard science departments at flagship universities struggle with broken equipment and virtually no funding, while other departments manage to keep publishing using desktop research and existing data.

The pipeline into difficult disciplines was thinning everywhere before AI arrived. Now the incoming message to every student on every continent is the same: the output that used to require years of study can be generated in seconds.

Jacquelynne Eccles and Allan Wigfield, two developmental psychologists who spent over four decades at the University of Michigan and the University of Maryland studying why people choose hard things over easy ones, built one of the most cited frameworks in education research to explain exactly this dynamic. Their Situated Expectancy-Value Theory breaks motivation into components, but the two that matter here are straightforward. How confident am I that I can succeed at this? And how much will it be worth if I do?

We can simplify:

Motivation to Learn = Perceived Future Value × Difficulty Tolerance

You read this as: a person’s willingness to endure the pain of hard learning equals how valuable they believe that learning will be, multiplied by their tolerance for difficulty.

This is a multiplication problem. If either variable approaches zero, the whole product collapses. And AI has cratered the first variable. The difficulty tolerance was already low for most people. You don’t need both variables to fall. One is enough.
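A few lines of Python show why the multiplication matters. This is only a sketch of the simplified formula above, not of Eccles and Wigfield’s actual model, and the 0-to-1 scales, the function name, and the numbers are all assumptions chosen for illustration.

```python
def motivation_to_learn(perceived_value, difficulty_tolerance):
    """Simplified product from the essay; both inputs assumed to be 0.0 to 1.0."""
    return perceived_value * difficulty_tolerance


# Hypothetical student with modest tolerance for difficulty.
tolerance = 0.3

before_ai = motivation_to_learn(perceived_value=0.8, difficulty_tolerance=tolerance)
after_ai = motivation_to_learn(perceived_value=0.05, difficulty_tolerance=tolerance)

# Even perfect tolerance cannot rescue a perceived value of zero.
no_value = motivation_to_learn(perceived_value=0.0, difficulty_tolerance=1.0)

print(f"before: {before_ai:.3f}, after: {after_ai:.3f}, no value: {no_value:.1f}")
```

Tolerance never moved in this example. Only perceived value fell, and the product fell with it. If the two terms were added instead of multiplied, a high tolerance could compensate for a low perceived value. With a product, it cannot.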

Self-Understanding Requires a Library You Can’t Download

If we outsource the struggle with external systems to machines, we theoretically free up cognitive bandwidth. No need to grind through thermodynamics. No need to suffer through contract law. The machine handles the domain. You handle... what, exactly?

The optimistic answer is: yourself. Understanding yourself. Your decisions, your patterns, why you keep making the same mistakes in relationships, why you freeze in certain conversations, why you procrastinate on things you care about and power through things you don’t. Self-understanding. The last frontier. The one no machine can navigate for you because the data lives inside your body and your history and your particular wiring.

This would be a wonderful outcome. Outsource the external, go deeper on the internal, become the most self-aware generation in human history.

But self-understanding doesn’t arrive through free time and good intentions. It comes from recognizing patterns. And recognizing patterns requires a library of reference experiences to match against.

When a person who struggled for years to learn an instrument encounters a period of creative stagnation in their career, they have a reference. They know what it felt like to plateau. They know the plateau wasn’t permanent. They know that breaking through required changing their approach, not quitting the instrument. They map the old experience onto the new one, and the mapping gives them both patience and a strategy.

When a person who has never struggled through anything difficult encounters the same stagnation, they have nothing to map it to. The experience is novel, disorienting, and without reference. They don’t know if it’s permanent. They don’t know if pushing through will help or if they should quit. They have no prior data point for “I was stuck and eventually broke through” because they’ve never been stuck on anything long enough to break through it.

People’s willingness to engage with difficult tasks depends heavily on prior experiences of success with difficult tasks. Each hard thing you’ve solved becomes evidence that the next hard thing is solvable. Each struggle you’ve abandoned reinforces the belief that struggle means failure. Eccles and Wigfield’s decades of longitudinal data show this clearly across age groups, cultures, and disciplines.

Self-Understanding = f(Library of Reference Experiences)

You read this as: your capacity for self-understanding is a function of the reference experiences you’ve accumulated through prior struggles. No library, no references. No references, no recognition when the same dynamics show up in your personal life wearing different clothes.

Outsource every external struggle to a machine, and the library doesn’t just shrink. It empties.

The Pipeline Breaks Quietly

Remember the herring.

The old fish didn’t just know the routes. They understood why those routes worked. Remove them and the young fish don’t know what they’ve lost. They swim with confidence into cold water.

Professional domains are losing their experienced fish the same way. Traditional consulting and legal services depend on a leverage model where junior professionals perform routine tasks that slowly build expertise. Review enough contracts and you develop an instinct for unusual clauses. Sit through enough client meetings and you learn to read the room. As AI automates those routine tasks, the training pipeline for future experts quietly collapses. The junior associate produces the same output. They just never develop the judgment that would let them catch what the machine misclassified as standard.

But the loss extends beyond professional competence. The same pipeline that builds domain expertise also builds the reference library for personal judgment. The junior associate who never struggled through contract review also never learned what it feels like to be confident about something and then discover they were wrong. They never had to recalibrate. That experience would have transferred to every other domain of their life, including the ones that matter most. Now it’s gone.

We can express this as a supply problem:

Supply of Deep Learners = f(Perceived ROI of Hard Study)

You read this as: the number of people willing to invest in deep, difficult learning is a function of how much return they believe that investment will generate.

As perceived ROI drops, supply drops. As supply drops, the remaining deep learners become more valuable, but the lag between “nobody is studying this anymore” and “we desperately need someone who studied this” could be a decade. By then the pipeline is dry. The experienced fish are gone. The young ones are swimming into cold water with full confidence and no reference for what cold water feels like.
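The lag is easier to see in a toy model. The sketch below assumes, purely for illustration, that it takes ten years to turn a beginner into an expert, that 100 people a year start hard study, and that perceived ROI collapses in year 10 so that only 10 a year start afterward. None of these numbers come from data, and the function name is hypothetical.

```python
def new_experts_per_year(entrants_by_year, training_years):
    """Experts emerging in a given year are the people who started training_years earlier.

    Years before the first cohort finishes are None (the toy model has no data for them).
    """
    result = []
    for year in range(len(entrants_by_year)):
        start_year = year - training_years
        result.append(entrants_by_year[start_year] if start_year >= 0 else None)
    return result


# Hypothetical pipeline: steady intake for 10 years, then a collapse in intake.
entrants = [100] * 10 + [10] * 20
experts = new_experts_per_year(entrants, training_years=10)

for year in (10, 15, 19, 20):
    print(f"year {year}: entrants={entrants[year]}, new experts={experts[year]}")
```

From year 10 through year 19 in this model, intake has already collapsed while the supply of new experts looks perfectly healthy. The shortage only shows up in year 20, a full training cycle after the cause, when nothing can be done about it quickly.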

So What Do You Do with This

The person reading this article is probably not 19. They’re probably not deciding whether to major in physics. They’re probably somewhere in their thirties or forties or fifties, already deep into a career, already aware that something feels off when they try to navigate a difficult personal situation and reach for a framework that isn’t there.

This is for them.

The reference library doesn’t close. It’s not a window that shuts at 22. It’s a running total. Every difficult thing you take on from this point forward adds to it. The language you start learning at 40. The instrument you pick up again after 20 years. The business you build that fails and teaches you what your own failure response actually looks like. The conversation you’ve been avoiding for a decade that, when you finally have it, shows you something about yourself you couldn’t have learned any other way.

The key is that the difficulty has to be real. Not conceptual. Not simulated. Not AI-assisted. You have to actually sit with not knowing. You have to experience the specific discomfort of being bad at something you care about, and stay with it long enough to find out whether you can get better. That experience, the lived texture of it, becomes a reference point that no generated output can substitute.

Seinfeld was right. Most of the intelligence we’ve encountered in our lives has been artificial. Performed competence. Memorized talking points. Recycled frameworks presented as original thinking. AI didn’t create that problem. It just made the performance available to everyone, at scale, for free.

But the person who actually struggled through something, who built their reference library the slow and painful way, they aren’t performing. They’re drawing on real data. And when the moment comes that can’t be outsourced, the career crisis, the relationship reckoning, the identity question that won’t resolve with a prompt, they have something in the drawer.

A world that outsources every difficult external process to machines is a world that produces people with empty reference libraries. People who have never struggled through something hard enough to learn what their own struggle looks like. People who, when they finally encounter the one problem that can’t be outsourced, the problem of understanding themselves, have zero prior data to work with.

The cost of outsourced understanding isn’t that nobody will understand physics. A small, strange sliver always will. The cost is that the rest of us will arrive at the most important questions of our lives (who am I, why do I keep doing this, what do I actually want) with nothing in the drawer to consult. No reference points. No prior evidence that confusion is survivable, that struggle has a far side, that clarity comes after the suffering and not instead of it.

Your library is still open. The question is whether you’re adding to it or letting the machines do that too.
